AI, Meaningful Work, and the Trust Collapse

Artificial Intelligence [AI] is not only threatening work by doing it faster; it is threatening work by making the evidence of work harder to believe.

That is the more demoralizing wound. Not the cartoon version, where a glowing robot strolls into the office wearing a lanyard and takes your chair. The nastier version is quieter. A report appears. A song appears. A blog post appears. A product mockup appears. A clinical summary appears. A student essay appears. A grant proposal appears. Everything has the surface temperature of competence. Everything has paragraphs, transitions, headings, diagrams, tasteful semicolons, and that faintly disinfected prose smell of a hotel corridor after housekeeping has passed through. Yet one begins to suspect that nobody was really there. No mind wrestled the thing down. No one got stuck, circled back, changed position, noticed a contradiction, or paid the small private tax of thinking.

The output exists. But does the work?

This distinction sounds philosophical until it becomes operational. In actual organizations, the bottleneck is rarely the production of artifacts. There has never been a shortage of documents, slides, dashboards, status updates, roadmaps, summaries, or strategy rectangles wearing expensive fonts. The bottleneck is usable consequence on the ground: a broken interface repaired, a patient matched correctly across systems, a workflow made less stupid, a claim routed without ritual sacrifice, a musician finding listeners, a writer earning attention sentence by sentence, a junior engineer learning why the obvious fix is a bear trap with handles.

AI is superb at increasing artifact volume. It is far less reliable at increasing grounded consequence.

That distinction matters because modern work already had a counterfeit-output problem before AI arrived. Many organizations had become temples of performative production. People generated work-shaped objects to survive meetings, satisfy governance, appease compliance, impress leadership, and provide documentary fossils for later blame assignment. AI did not invent this. It merely handed the mimeograph machine to everyone and attached a small jet engine.

A bad human document used to require effort, which at least imposed a speed limit. A bad AI document can arrive instantly, wearing a better tie.

This is where the phrase “AI slop” is useful, though it risks becoming another internet bucket into which everyone throws whatever annoyed them before lunch. Slop is not merely low quality. Slop is output optimized for appearance rather than responsibility. It looks like work from a distance. Up close it has no load-bearing beams. It asks the reader, reviewer, colleague, editor, teacher, engineer, or clinician to perform the missing labor after the fact. The maker saves time. The receiver inherits ambiguity.

In enterprise life this becomes “workslop,” the office cousin of spam, only more polite. A manager receives a strategy memo that says all the right things about transformation, agility, stakeholder alignment, and measurable outcomes, yet contains no hard choices. An analyst sends a synthetic summary of a system she has not inspected. A developer uses AI to produce code that compiles but smuggles in assumptions about data shape, error handling, security, and state. A healthcare architect gets a glossy interoperability plan that mentions Fast Healthcare Interoperability Resources [FHIR, a modern healthcare data exchange standard built around modular resources and implementation guides] as if naming the standard were equivalent to solving identity, workflow, terminology, provenance, and consent.

The result is not productivity. It is displaced cognition.

Before AI, bad work was often visible because it arrived with human fingerprints: hesitations, omissions, awkwardness, misplaced confidence, weird local knowledge, a sentence that revealed the author had actually been in the room. AI removes many of these surface clues. It sands everything to a pleasant finish. The desk looks polished. Unfortunately, the drawers are full of raccoons.

That is why the trust crisis is not a side effect. It is central.

When every artifact may be synthetic, the reader has to ask a new question before asking whether the thing is good: what kind of thing am I looking at? Is this witness, analysis, transcription, synthesis, marketing, hallucination, imitation, or laundering? Was it produced by someone accountable to the consequences? Was it assembled from sources that exist? Was it checked against reality? Did a person understand it, or merely approve its tone?

The old internet had its own cesspools, frauds, plagiarists, search-engine hucksters, and content mills. Let no nostalgic fool pretend the past was a monastery with better typography. But the older web still preserved a rough relationship between effort and volume. Publishing at scale required labor, money, templates, farms of underpaid writers, or at least a certain grim dedication to nonsense. Generative AI [GenAI, AI systems that produce text, images, audio, code, or other media from learned statistical patterns] breaks that relationship. The marginal cost of another plausible artifact falls toward zero.

When cost falls toward zero, volume explodes.

When volume explodes, attention becomes more expensive.

When attention becomes more expensive, trust becomes the real currency.

This is brutal for slow human output because human work has a metabolism. A serious essay requires reading, digestion, doubt, revision, boredom, irritation, and occasionally the disgraceful discovery that one’s magnificent first thought was a damp cardboard box. A good piece of music requires taste and exclusion. A useful software design requires contact with failure. A good healthcare data model requires knowledge of what clinicians actually do, what billing requires, what regulators demand, what interface engines deform, and what the source system was never designed to express.

The machine does not need to live through the constraint. It can simulate the residue.

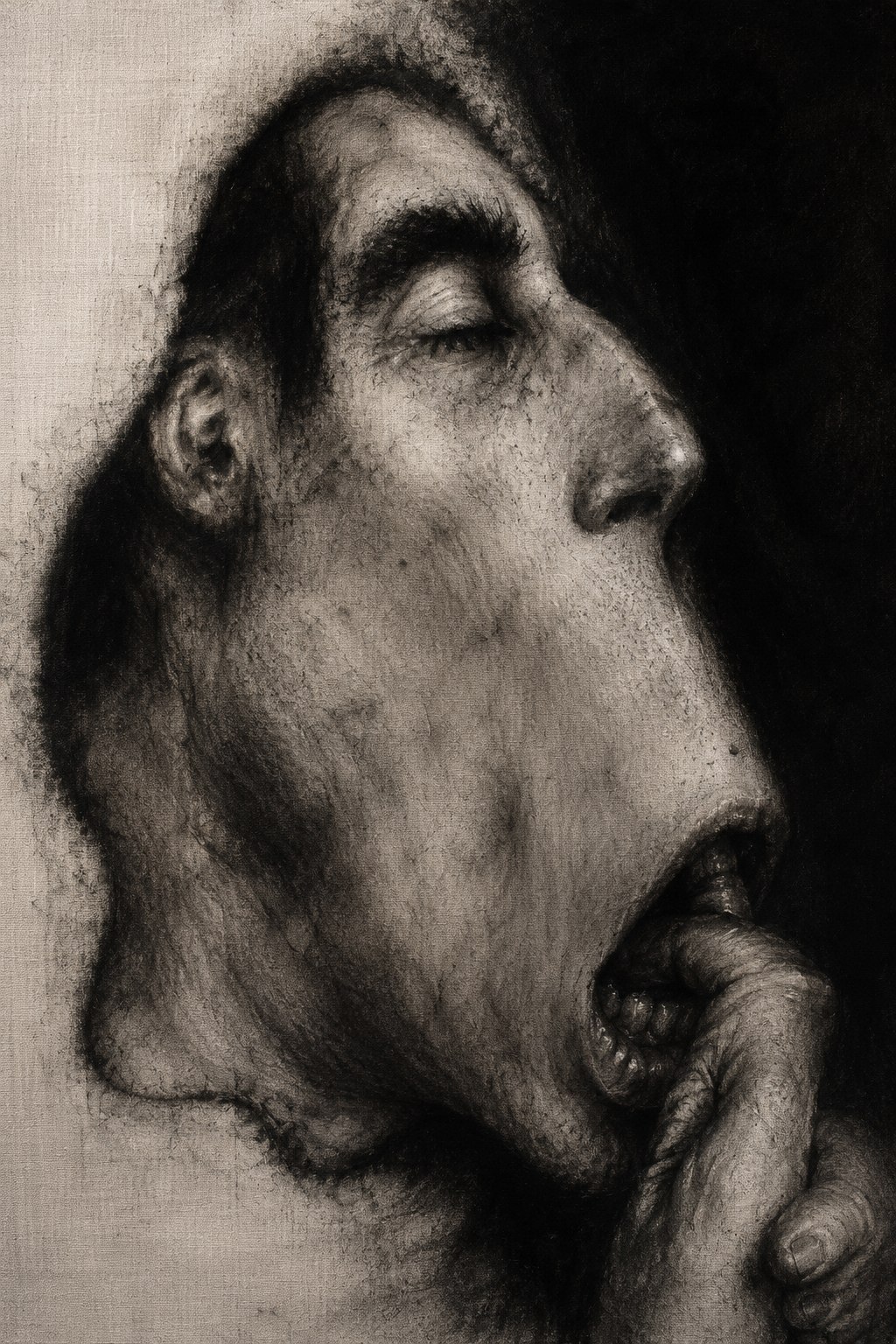

That simulation is the demoralizing part. AI can imitate not only the output but the emotional costume of effort. It can sound weary, wise, technical, lyrical, skeptical, tender, angry, or amused. It can produce a poem about grief without grieving, a song about Calcutta without sweating through a power cut, a healthcare architecture essay without ever having watched a supposedly clean Admission, Discharge, Transfer [ADT, the family of messages that describes patient movement and demographic events in many Health Level Seven version 2 environments] feed turn into an untraceable swamp because three facilities used the same field differently and one interface analyst retired in 2014 with the only living map in his skull.

This does not make AI useless. It makes it dangerous to confuse performance with provenance.

Provenance is the plain old question of where something came from and what happened to it along the way. In healthcare data systems, provenance is not decorative metadata. It is the difference between a fact, a claim, a correction, a copy, a transformation, and a rumor with a timestamp. The same lesson now applies to culture and work. A paragraph without provenance is not necessarily false. But its trust burden has increased. A song without provenance may still move someone. But the listener may wonder whether the emotion came from a person or from a statistical blender trained on ten million stolen sighs.

The moral problem is not that AI cannot create anything useful. Of course it can. The boring anti-AI argument collapses the moment a programmer saves three hours, a dyslexic worker drafts a readable memo, a researcher summarizes papers, a designer explores variations, or a patient gets a clearer explanation of a lab result. The machine can be helpful. Sometimes wonderfully so.

The better objection is that usefulness at the individual task level can still produce degradation at the system level.

A road can help one driver go faster and still create traffic when everyone takes it. Antibiotics can save one patient and still create resistance when misused at population scale. Cheap synthetic output can help one person express a thought and still flood the shared channels until nobody can tell expression from extrusion.

This is the non-obvious architectural insight: AI does not merely automate production; it changes the verification topology around production. In a human-paced system, making was expensive and checking was comparatively cheap. In a machine-paced system, making becomes cheap and checking becomes the scarce function. The burden moves from authoring to authentication, from drafting to review, from creativity to curation, from output to trust infrastructure.
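The shift from making to checking can be put in back-of-envelope form. What follows is only a sketch: the hours are invented for illustration, not measured anywhere, and `checking_share` is a hypothetical helper rather than part of any real tool. It shows how the share of total effort spent on checking climbs once making gets cheap while checking costs what it always did.

```python
def checking_share(make_hours: float, check_hours: float) -> float:
    """Fraction of the total effort on one artifact that goes to checking it."""
    total = make_hours + check_hours
    if total <= 0:
        raise ValueError("total effort must be positive")
    return check_hours / total


# Hypothetical figures: checking takes two hours in both worlds.
human_paced = checking_share(make_hours=8.0, check_hours=2.0)
machine_paced = checking_share(make_hours=0.1, check_hours=2.0)

print(f"human-paced:   {human_paced:.0%} of effort is checking")
print(f"machine-paced: {machine_paced:.0%} of effort is checking")
```

Under these assumed numbers the reviewer goes from carrying a fifth of the effort to carrying nearly all of it, without the review itself getting any harder.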

That is a profound shift.

The creative person feels it first as despair. Why write a slow essay when a machine can make a plausible one in seconds? Why compose, draw, code, teach, edit, translate, design, or explain if the public square is filling with synthetic pastiche? Why polish a sentence when the feed rewards speed, volume, novelty, outrage, and the dead-eyed fluency of models that never sleep?

The despair is understandable because the market often does not reward meaning directly. It rewards discoverability, packaging, timing, network effects, platform fit, institutional endorsement, and sheer luck. AI attacks the weak point in that arrangement by producing infinite packaging. It can generate titles, thumbnails, summaries, hooks, slogans, comments, replies, and entire fake neighborhoods of engagement. Slow human work enters the market like a handmade boat crossing a river just as a factory upstream begins dumping inflatable rafts by the million.

Some of the rafts will be useful. Many will be garbage. The river will still clog.

Meaningful work has always depended on more than income. It depends on apprenticeship, difficulty, recognition, identity, and consequence. A junior developer becomes senior by encountering real failure under constraint. A writer becomes better by being wounded by exactness. A clinician develops judgment by seeing cases refuse to behave like textbook paragraphs. A data architect learns when a canonical model should be strict, when it should be tolerant, and when it should admit that the enterprise is not one system but a federation of local truths pretending to be a republic.

If AI absorbs the lower rungs, the ladder does not simply become shorter. It becomes biologically strange. Where do experts come from if beginners are never allowed to be slow, inefficient, supervised, annoying, and wrong? A society that automates apprenticeship may later discover that it has kept the senior title while canceling the process that manufactures seniors.

This is already visible in software, writing, design, journalism, analytics, and even research. The entry-level task was never merely cheap labor. It was the training membrane. It was where people learned taste, judgment, domain texture, and the sacred professional art of noticing when the assignment itself is idiotic. Remove that layer in the name of efficiency and you may get short-term savings followed by a strange hollowing-out. The organization becomes glossy and brittle. It can produce artifacts but not repair itself.

Creative expression faces a related but more intimate damage. Art and writing are not valuable only because they produce objects. They are ways of metabolizing experience. A human essay says, “This is what it felt like to think through this from a particular body, history, fear, education, city, wound, profession, and hour of life.” AI can imitate that sentence. It cannot own the wound. It cannot be embarrassed by its earlier draft. It cannot have wasted ten years, loved a place, fled another, sat alone in a room in Calcutta, or learned that a technical standard becomes real only after some tired engineer maps it to a database designed by a committee of ghosts.

The future value of human work may therefore move toward situatedness. Not just “Is this good?” but “Who stands behind it?” Not just “Is this fluent?” but “What did this person see, risk, test, repair, endure, or understand?” The generic middle will be eaten first because the generic middle was always vulnerable. The safest sentence in the future may be the one that could not have been written by nobody.

This does not mean every human must perform authenticity like a monkey in a waistcoat. That would be another misery. It means the architecture of publishing and work will need stronger signals of human accountability. Verified authorship. Clear AI disclosure. Process notes when appropriate. Source trails. Editorial standards. Smaller trusted networks. Reputation built over time. Technical artifacts tied to implementation evidence. Creative work tied to voice, not merely topic. In healthcare terms, this is provenance, auditability, and semantic governance dragged out of the data warehouse and applied to public culture.
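For readers who think in schemas, those signals might be carried alongside an artifact roughly as follows. This is a minimal sketch under stated assumptions: `ProvenanceRecord`, `AIInvolvement`, and `missing_signals` are hypothetical names invented here, not a real standard or library, and the rules are one possible reading of the list above rather than a settled policy.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class AIInvolvement(Enum):
    NONE = "none"
    ASSISTED = "assisted"    # brainstorming, boilerplate, summaries
    GENERATED = "generated"  # the machine produced most of the artifact


@dataclass
class ProvenanceRecord:
    title: str
    accountable_author: Optional[str]  # the person who stands behind it
    ai_involvement: AIInvolvement      # disclosure, not a verdict
    sources: List[str] = field(default_factory=list)
    process_notes: str = ""


def missing_signals(record: ProvenanceRecord, factual: bool) -> List[str]:
    """List the accountability signals this artifact still lacks."""
    gaps = []
    if not record.accountable_author:
        gaps.append("accountable author")
    if factual and not record.sources:
        gaps.append("source trail")
    if record.ai_involvement is AIInvolvement.GENERATED and not record.process_notes:
        gaps.append("process notes")
    return gaps


# Hypothetical examples.
essay = ProvenanceRecord("Interface notes", "A. Author", AIInvolvement.ASSISTED)
orphan = ProvenanceRecord("Ten tips", None, AIInvolvement.GENERATED)

print(missing_signals(essay, factual=True))    # ['source trail']
print(missing_signals(orphan, factual=False))  # ['accountable author', 'process notes']
```

The design choice worth noticing is that AI involvement is recorded but never disqualifies anything by itself. What the check looks for is a named person, an inspectable trail, and some account of process where the machine did most of the making.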

There is a useful distinction here between transport and meaning. Transport moves the artifact. Meaning explains what the artifact is, what it represents, and why it should be trusted. Health Level Seven version 2 [HL7 v2, the older but still widely used messaging standard for healthcare integration] can transport a lab result across an interface. It does not guarantee that the receiving system understands the clinical, temporal, or workflow meaning in the same way. Likewise, the internet can transport a million AI-generated essays, songs, résumés, comments, and product reviews. Transport success is not semantic success. Delivery is not understanding. Availability is not trust.

A great deal of confusion comes from mistaking the first for the second.

Representation failures are often mislabeled as data quality failures in healthcare. The same mistake is now spreading to culture. When a synthetic article feels hollow, the issue is not always “bad content” in the simple sense. It may be a representation failure: the artifact represents expertise without earned expertise, emotion without experience, locality without presence, confidence without accountability, synthesis without judgment. Calling this merely low quality is like blaming a malformed clinical registry entry on sloppy typing when the real problem is that the workflow never captured the phenomenon the registry later demanded.

The machine is often not lying in the human sense. It is representing without inhabiting.

That is why AI detection alone will not save us. Detection is brittle, adversarial, and philosophically messy once human and machine production become entangled. A writer may use AI to brainstorm and still write the essay. A musician may use AI for arrangement but not authorship. A developer may ask AI for boilerplate but personally own the design. A researcher may use AI to summarize papers but perform the real reasoning afterward. The boundary will not always be clean. Demanding purity may become impractical, even silly.

The practical question is not “Was AI involved?” The better question is “Who is accountable for the final meaning and consequence of this artifact?”

That question is harder to automate.

For a serious blog, especially one trying to survive in the new machine-readable swamp, the implication is uncomfortable but clarifying. The answer cannot be to outpublish the machines. They have already won that contest. They do not need tea, sleep, shame, or digestive regularity. The answer is to become more legible as a trusted human source: consistent domain depth, explicit judgment, careful titles, clean metadata, durable URLs, accessible structure, transparent references when factual claims matter, and prose with enough lived grain that it cannot be confused with the beige paste extruded by a thousand prompt farms.

One should use AI, but not become its ventriloquist dummy.

Use it as a draftsman, critic, indexer, translator, adversary, or research assistant. Do not let it replace the hard part: deciding what is true enough, useful enough, earned enough, and yours enough to publish. The human role moves upward, but not into some lazy executive cloud. It moves toward responsibility. Judgment becomes the scarce craft. Taste becomes infrastructure. Slowness becomes a signal, though only when joined to quality. Merely being slow and human is not noble. A tortoise can still wander into traffic.

The clean solution is prevented by a realistic constraint: platforms are economically rewarded for volume, engagement, and enclosure, not for preserving the moral ecology of authorship. Search engines, social feeds, streaming services, marketplaces, and AI answer engines all have incentives to ingest, summarize, rank, remix, and monetize output faster than trust institutions can adapt. Regulation will lag. Labeling will be inconsistent. Detection will fail. Consumers will prefer convenience while complaining about degradation, which is the ancient human arrangement: curse the flood, click the boat.

So the remaining architectural direction is partial, defensive, and stubborn.

Build trust as a first-class layer. Treat human authorship as provenance, not vanity. Preserve process where it matters. Make sources inspectable. Separate drafts from certified outputs. In organizations, do not measure AI success by artifact count. Measure downstream rework, error rates, decision latency, user trust, operational throughput, and whether junior people are still learning the terrain. In creative work, do not chase the machine into infinite generic production. Go narrower, deeper, stranger, more accountable, more situated. Write the thing that requires having lived in your particular nervous system.
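The measurement advice for organizations can be sketched in a few lines. Everything here is an assumption made for illustration: the `Artifact` fields, the `summarize` helper, and the before-and-after numbers are hypothetical, and a real organization would need its own definitions of rework and acceptance. The point is only that artifact count and downstream rework can move in opposite directions.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Artifact:
    make_hours: float    # effort spent producing it
    rework_hours: float  # effort spent downstream fixing or re-checking it
    accepted: bool       # did it survive review and actually get used?


def summarize(artifacts: List[Artifact]) -> Dict[str, float]:
    """Report artifact count next to the measures that matter more."""
    count = len(artifacts)
    make = sum(a.make_hours for a in artifacts)
    rework = sum(a.rework_hours for a in artifacts)
    accepted = sum(1 for a in artifacts if a.accepted)
    return {
        "artifact_count": count,
        "acceptance_rate": accepted / count if count else 0.0,
        "rework_ratio": rework / make if make else 0.0,
    }


# Hypothetical quarter before AI: few artifacts, slow to make, little rework.
before = summarize([Artifact(6.0, 1.0, True) for _ in range(4)])

# Hypothetical quarter after: many artifacts, fast to make, heavy rework.
after = summarize(
    [Artifact(0.5, 3.0, True) for _ in range(6)]
    + [Artifact(0.5, 3.0, False) for _ in range(4)]
)

print(before)
print(after)
```

In this invented example the count rises from 4 to 10 while the rework ratio goes from about 0.17 to 6.0 and acceptance falls to 60 percent. A dashboard that showed only the first number would report a triumph.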

AI will take over a great deal of meaningful work if we define work as the production of plausible artifacts. It will take over less if we define meaningful work as accountable transformation under constraint.

That definition is not sentimental. It is architectural.

A system is known by what it preserves under pressure. If AI lets us produce more while preserving less judgment, less apprenticeship, less trust, less authorship, less locality, and less consequence, then the productivity story is a badly labeled dashboard. The numbers may go up while the civilization quietly loses the ability to tell who did what, who knows what, and what any of it is worth.

The gnawing thought keeps returning because it is not merely anxiety about tools. It is a recognition that the shared world depends on friction. Not all friction is waste. Some of it is verification. Some of it is learning. Some of it is taste forming in the dark. Some of it is the human mind refusing to be replaced by its most fluent shadow.